Karpenter – Simplify Autoscaling for Amazon EKS

- Nitin Yadav

- Auto Scaling, AWS, EKS, Kubernetes, Terraform

Introduction

Kubernetes has become the de facto standard for container orchestration, enabling developers to manage and deploy containerized applications at scale. Amazon’s Elastic Kubernetes Service (EKS) is a fully managed Kubernetes service that makes it easy to run Kubernetes on AWS. However, as applications grow, managing resources effectively can become a challenge. That’s where Karpenter comes in.

What is Karpenter?

Karpenter is a just-in-time capacity provisioner for any Kubernetes cluster, not just EKS. It is designed to simplify and optimize resource management in Kubernetes clusters by intelligently provisioning the right resources based on workload requirements. Although Karpenter can be used with any Kubernetes cluster, in this article, we will focus on its benefits and features within the context of AWS and EKS.

Advantages of Karpenter over Cluster Autoscaler

EKS best practices recommend using Karpenter over Autoscaling Groups, Managed Node Groups, and Kubernetes Cluster Autoscaler in most cases. Here’s why Karpenter has the edge:

- No need for pre-defined node groups: Unlike Cluster Autoscaler, Karpenter eliminates the need to create dozens of node groups, decide on instance types, and plan efficient container packing before deploying workloads to EKS.

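- Not tightly coupled to Kubernetes versions: Unlike Cluster Autoscaler, Karpenter is not tied to specific Kubernetes or EKS versions, and it does not require you to jump between AWS and Kubernetes APIs. This provides greater flexibility and easier upgrades.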

- Adapts to changing workload requirements: With Karpenter, there’s no need to update node group specifications if workload requirements change, resulting in less operational overhead.

- Intelligent instance selection: Karpenter can be configured to control which EC2 instance types are provisioned. It then intelligently chooses the correct instance type based on the workload requirements and available compute capacity in the cluster.
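To make the instance selection idea concrete, here is a minimal sketch in Python. It is not Karpenter's actual algorithm: the catalog, the instance names and the relative costs are made up for illustration, and real scheduling also accounts for things like daemonsets and reserved capacity. It only shows the basic rule of picking the cheapest allowed instance type that fits the pending pods.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str           # hypothetical name, not a real EC2 type
    cpu: float          # vCPUs
    memory_gib: float
    hourly_cost: float  # made-up relative cost, not a real price


@dataclass(frozen=True)
class PodRequest:
    cpu: float
    memory_gib: float


def cheapest_fit(pods, catalog, allowed=None):
    """Return the cheapest allowed instance type that fits all pods, or None."""
    need_cpu = sum(p.cpu for p in pods)
    need_mem = sum(p.memory_gib for p in pods)
    candidates = [
        it for it in catalog
        if (allowed is None or it.name in allowed)
        and it.cpu >= need_cpu
        and it.memory_gib >= need_mem
    ]
    return min(candidates, key=lambda it: it.hourly_cost, default=None)


# Illustrative catalog (assumption, not AWS data).
CATALOG = [
    InstanceType("small", 2, 4, 1.0),
    InstanceType("medium", 4, 16, 2.0),
    InstanceType("large", 8, 32, 4.0),
]

pending = [PodRequest(1.5, 2), PodRequest(1.0, 4)]
choice = cheapest_fit(pending, CATALOG)
print(choice.name)  # medium
```

Passing `allowed={"small", "large"}` mimics restricting which instance types may be provisioned; the same pending pods would then land on the larger type.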

Key Features of Karpenter

Karpenter offers several powerful features that set it apart from traditional autoscaling solutions:

- Native support for Spot instances: Karpenter can handle provisioning and interruptions of Spot instances, maintaining application availability while reducing costs.

- Automatic node replacement with Time-to-live (TTL): Karpenter can automatically replace worker nodes once they reach a defined TTL, keeping nodes cleaner with fewer accumulated logs, temporary files, and caches.

- Advanced node provisioning logic: Karpenter allows for defining advanced node provisioning logic using simplified YAML definitions, making it easier to manage complex configurations.
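The TTL-based replacement described above boils down to comparing each node's age with a configured lifetime. The sketch below illustrates that check only; the node names and dates are hypothetical, and Karpenter's real implementation also drains nodes and launches replacements.

```python
from datetime import datetime, timedelta, timezone


def expired_nodes(nodes, ttl, now):
    """Return the names of nodes whose age has reached the TTL.

    nodes: mapping of node name -> creation time (timezone-aware datetime).
    """
    return sorted(name for name, created in nodes.items() if now - created >= ttl)


# Hypothetical nodes and a fixed "now" so the example is reproducible.
now = datetime(2024, 1, 31, tzinfo=timezone.utc)
nodes = {
    "node-a": datetime(2024, 1, 1, tzinfo=timezone.utc),   # 30 days old
    "node-b": datetime(2024, 1, 25, tzinfo=timezone.utc),  # 6 days old
}

print(expired_nodes(nodes, timedelta(days=7), now))  # ['node-a']
```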

The Cost Factor

Karpenter optimizes resource usage in Kubernetes clusters, which can translate into significant cost savings. By assessing the resource requirements of pending pods, it selects the most suitable instance type for running them, ensuring efficient resource utilization. It can also scale in automatically, terminating instances that are no longer in use, which reduces waste and lowers costs.

In addition, Karpenter offers a consolidation feature that reschedules pods and either deletes nodes or replaces them with more cost-effective alternatives to further reduce cluster costs. This proactive approach to cost management helps users get more value from their Kubernetes clusters while minimizing waste.
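As a rough illustration of consolidation, the sketch below checks whether a node could be removed because all of its pods fit into the spare CPU of the remaining nodes. It is a simplified first-fit model under stated assumptions (CPU only, hypothetical node names and numbers), not Karpenter's actual consolidation logic.

```python
def can_remove(node, free_cpu, pods_on):
    """Return True if every pod on `node` fits into free CPU on the other nodes.

    free_cpu: mapping of node name -> unallocated CPU on that node.
    pods_on:  mapping of node name -> list of CPU requests of its pods.
    """
    spare = {n: c for n, c in free_cpu.items() if n != node}
    # Place the largest pods first (first-fit decreasing).
    for cpu in sorted(pods_on[node], reverse=True):
        target = next((n for n, c in spare.items() if c >= cpu), None)
        if target is None:
            return False
        spare[target] -= cpu
    return True


# Hypothetical cluster state.
free_cpu = {"node-a": 0.5, "node-b": 2.0, "node-c": 1.0}
pods_on = {"node-a": [1.0, 0.5], "node-b": [2.0], "node-c": [3.0]}

removable = [n for n in free_cpu if can_remove(n, free_cpu, pods_on)]
print(removable)  # ['node-a']
```

Here only node-a qualifies: its two small pods fit into node-b's spare capacity, while the pods on node-b and node-c are too large to move anywhere else.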

Get started with Karpenter

With so many obvious reasons to use Karpenter, let's give it a try while you figure out your favourite use case.

Installing Karpenter on EKS

Karpenter deploys like any other component in a Kubernetes cluster. Helm charts are published by AWS to simplify the installation process. Here is a step-by-step guide to install Karpenter on any running EKS cluster and test it with a sample application workload.

As a prerequisite, you need access to the cluster using kubectl and helm, along with access to the AWS account using the AWS CLI (to configure IAM roles and related settings).

First, create an IAM role that will be associated with the worker nodes provisioned by Karpenter. This allows the worker nodes to join the EKS cluster and add to its available capacity. All the required IAM policies for this role can be added with these steps.

Take note of the IAM instance profile ARN; it will be passed to the Karpenter Helm configuration during installation. Create a custom values.yaml file, replacing cluster_name, cluster_endpoint and node_iam_instance_profile_arn (from the step above).

Next, install Karpenter on your EKS cluster, passing the above values.yaml file as an argument to the helm install command.

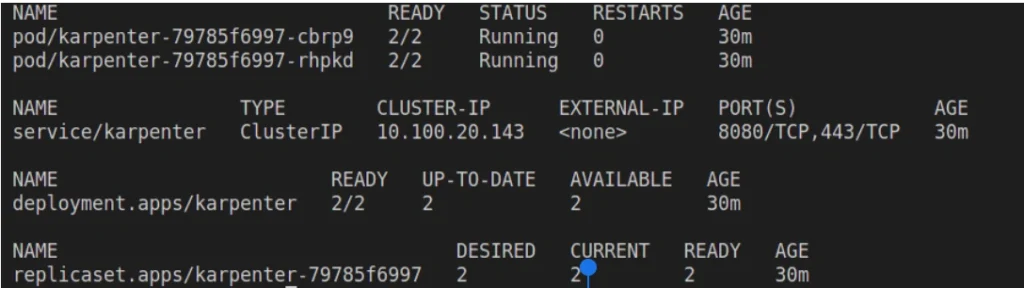

Verify that Karpenter is deployed successfully:

kubectl get all -n karpenter

Configuring Karpenter as node provisioner

Get the OIDC issuer of the cluster (dev-skaf in this example):

aws eks describe-cluster --name dev-skaf --query "cluster.identity.oidc.issuer" --output text

Get the name of the Kubernetes service account created by Karpenter:

kubectl get serviceaccount -n karpenter

Replace OIDC_PROVIDER_ARN and KARPENTER_SA_NAME with the values fetched by the above commands. Note the role ARN from the command output. Create an IAM policy with the required permissions and note the policy ARN from the output.

Attach the IAM policy to the role, and annotate the Karpenter service account with the ARN of the role. (Replace arn_of_karpenter_irsa_policy and arn_of_karpenter_irsa_role with the noted values.)

Now Karpenter has the IAM permissions required to create worker nodes, but it still needs the node provisioning logic, so let's define the Karpenter Provisioner configuration.

Here you again need to specify the EKS cluster name and the subnet selector for the nodes. Replace cluster_name and private_subnet_name with the correct values. Create a file and use the kubectl apply -f <filename> command to apply this Provisioner configuration.

Using Karpenter to create worker nodes

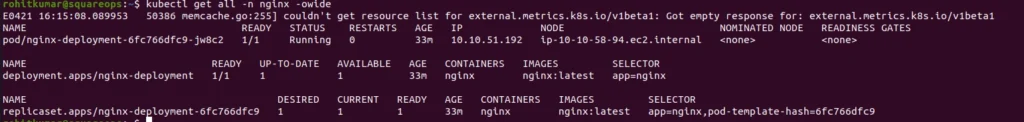

Now that Karpenter is all set for action, let's see how it can add capacity to the EKS cluster on demand, based on workload requirements. For this example, we create a simple nginx deployment, define its resource requirements, and see how Karpenter chooses the right EC2 instance for the worker node.
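For readers who want something to experiment with, here is a minimal sketch that builds an nginx Deployment manifest with explicit resource requests and prints it as JSON (kubectl accepts JSON manifests as well as YAML). The deployment name, labels and the 1 CPU / 1Gi requests are assumptions chosen for illustration, not necessarily the values used in this walkthrough.

```python
import json


def nginx_deployment(replicas=1, cpu="1", memory="1Gi"):
    """Build an apps/v1 Deployment manifest for nginx with resource requests."""
    labels = {"app": "nginx"}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "nginx", "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "nginx",
                        "image": "nginx",
                        # The requests are what a node provisioner sizes nodes against.
                        "resources": {"requests": {"cpu": cpu, "memory": memory}},
                    }]
                },
            },
        },
    }


manifest = nginx_deployment()
print(json.dumps(manifest, indent=2))
```

Saving the printed output to a file and applying it with kubectl apply -f <filename> should leave the pod pending until a node with enough free capacity exists, which is the situation Karpenter reacts to.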

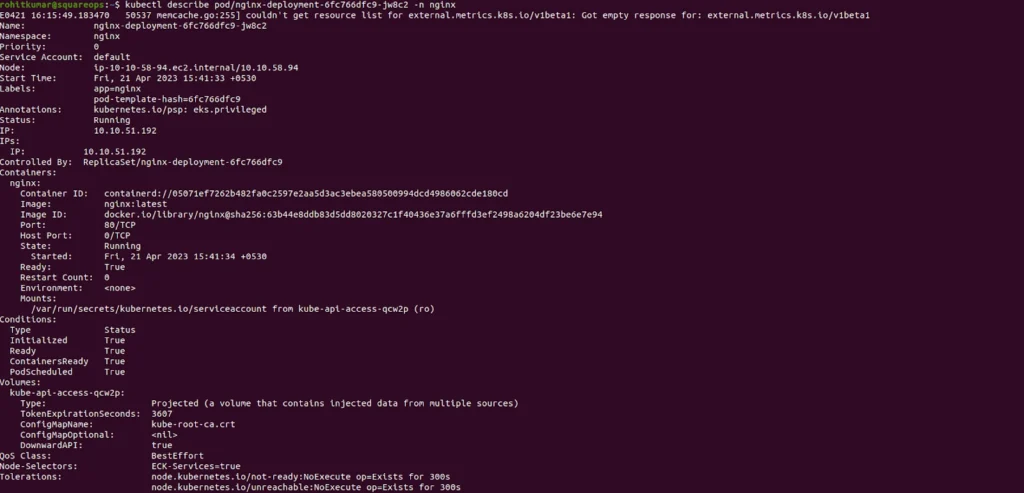

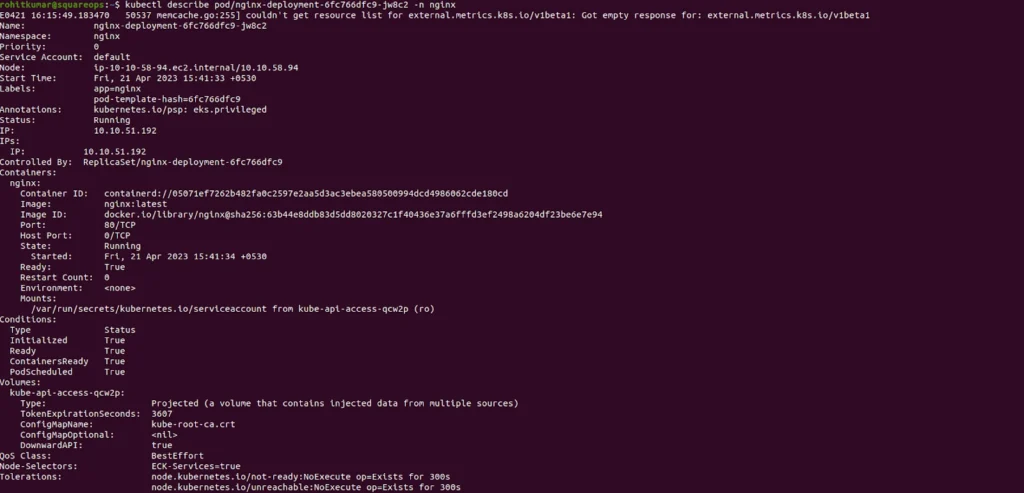

Verify the deployment: check that the pod is running and that a new node has been provisioned.

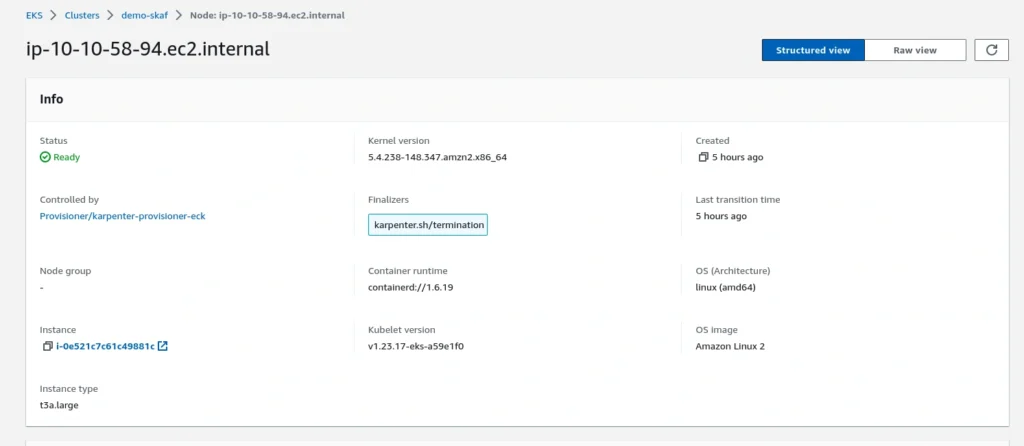

Get the node IP and verify it on the AWS Console.

Advanced use cases with Karpenter

Karpenter understands many of the scheduling constraints available in Kubernetes by default, including:

- resource requests and node selection

- node affinity and pod affinity/anti-affinity

- topology spread

Karpenter can detect the Availability Zone of Kubernetes persistent volumes backed by Amazon EBS and launch worker nodes in the same zone, avoiding cross-AZ mounting issues with the EBS storage class.
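The zone-awareness idea can be pictured as intersecting the zones a node could be launched in with the zones of the volumes a pod needs. The sketch below is only an illustration of that constraint; the function name and zone names are hypothetical and this is not Karpenter's code.

```python
def launch_zones(candidate_zones, volume_zones):
    """Return the zones where a node can be launched so that every
    EBS-backed volume used by the pod is in the node's zone."""
    zones = set(candidate_zones)
    for volume_zone in volume_zones:
        # An EBS volume can only be attached to a node in its own zone.
        zones &= {volume_zone}
    return sorted(zones)


candidates = ["us-east-1a", "us-east-1b", "us-east-1c"]

print(launch_zones(candidates, ["us-east-1b"]))                # ['us-east-1b']
print(launch_zones(candidates, []))                            # all three zones
print(launch_zones(candidates, ["us-east-1a", "us-east-1b"]))  # []
```

An empty result means no single node can satisfy the pod, for example when it references volumes in two different zones.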

Conclusion

We just witnessed how Karpenter can create and optimize EC2 capacity on demand for your EKS clusters. Installing and configuring Karpenter may seem like a task, but our Terraform module for EKS Bootstrap adds such useful drivers to your EKS cluster seamlessly.

Frequently asked questions

Karpenter is an open-source Kubernetes cluster autoscaler developed by AWS that simplifies the process of scaling Amazon EKS clusters. It automatically provisions and scales EC2 instances based on the real-time resource requirements of your workloads. This helps eliminate the complexities of manual capacity planning and scaling, making Kubernetes clusters more cost-efficient and responsive to changing demand.

Karpenter continuously monitors the EKS cluster for unscheduled pods and automatically provisions the appropriate EC2 instances to meet the resource needs of those pods. It dynamically calculates the optimal instance types, sizes, and the quantity of instances required, ensuring that resources are provisioned only when necessary and efficiently scaled back when demand decreases.

Karpenter provides significant benefits by automating resource scaling and reducing both over-provisioning and under-provisioning. It improves cost efficiency by right-sizing instances, leverages spot instances for lower costs, and enhances application performance by ensuring that the right resources are available at the right time. Additionally, it simplifies cluster management by removing the need for manual capacity planning.

Karpenter supports a wide array of EC2 instance types, including both On-Demand and Spot instances. It can provision instances from a variety of families (such as M, C, R, and T series) depending on the workload's requirements, making it flexible and cost-efficient. Karpenter also supports GPU and ARM-based instances for specialized workloads.

Karpenter eliminates the need for manual instance management. It automatically scales your cluster based on the resource requests defined in your Kubernetes workloads. This means you don't need to manually configure or adjust the cluster size: Karpenter takes care of provisioning and de-provisioning instances as needed.

Karpenter can scale resources based on both CPU and memory requirements, as well as other resource types, such as GPU. It dynamically adjusts the instance type and size to match the specific demands of your pods, ensuring optimal performance while minimizing costs. This flexibility allows Karpenter to efficiently scale clusters in response to diverse workloads.

Karpenter integrates seamlessly with Kubernetes’ Horizontal Pod Autoscaler (HPA). While HPA adjusts the number of replicas of a pod based on metrics like CPU and memory usage, Karpenter adjusts the underlying infrastructure by provisioning new EC2 instances to ensure that resources are available for the newly scheduled pods. This combined approach optimizes both pod and infrastructure scaling.
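The division of labour between the two can be sketched with a little arithmetic. The first function follows the scaling rule documented for HPA (desired replicas = ceil(current replicas × current metric / target metric), ignoring tolerance and stabilization); the second is a deliberately simplified assumption about how many nodes the new pods would need, with hypothetical parameter names.

```python
import math


def hpa_desired_replicas(current_replicas, current_util, target_util):
    """Replica count from the documented HPA rule (tolerance ignored)."""
    return math.ceil(current_replicas * current_util / target_util)


def nodes_to_add(new_pods, free_slots, pods_per_node):
    """Simplified estimate of nodes needed for pods that do not fit yet."""
    pending = max(0, new_pods - free_slots)
    return math.ceil(pending / pods_per_node)


current = 4
desired = hpa_desired_replicas(current, current_util=90, target_util=60)
extra_nodes = nodes_to_add(desired - current, free_slots=0, pods_per_node=2)

print(desired, extra_nodes)  # 6 1
```

In this example HPA raises the deployment from 4 to 6 replicas; with no free room on existing nodes, the two new pods stay pending until one more node is provisioned.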

Karpenter optimizes costs by provisioning only the necessary EC2 instances based on the actual resource needs of the workloads. It can use EC2 Spot instances for even greater cost savings and dynamically scale instances up or down based on real-time demand. By removing the need for over-provisioning and scaling resources efficiently, Karpenter helps reduce wasted capacity and cut infrastructure costs.

Karpenter is designed for simplicity and ease of use. It requires minimal configuration to deploy on an EKS cluster, and autoscaling is automated once it is installed. It integrates with the existing Kubernetes cluster and works out of the box with the Kubernetes scheduler. While some advanced configuration may be necessary for specific use cases, the default setup is straightforward for most applications.

One of Karpenter's key features is its ability to scale down unused resources to save on costs. When the demand for resources decreases (e.g., when pods are terminated or scaled down), Karpenter automatically identifies and terminates excess EC2 instances, so that you are not paying for idle capacity.
