By Ankush Madaan, SquareOps · Published May 9, 2026 · Last updated May 9, 2026

In this article

- Why traditional incident response fails at scale

- How do AI and LLMs transform SRE incident response?

- What does an AI-driven incident response architecture look like?

- What observability prerequisites do you need before adding AI?

- What's the real-world MTTR impact?

- What does AI-driven incident response actually cost to run?

- Which AI SRE tools should you evaluate in 2026?

- How to implement AI in your SRE workflow

- FAQ

Manual incident investigation eats 60-80% of MTTR. Engineers chase symptoms across a dozen dashboards, dig through gigabytes of logs, and replay the last twelve deploys. In 2026, AI is collapsing that work into minutes. Per the 2025 State of DevOps, average enterprise MTTR sits at 4-6 hours; teams piloting LLM-driven triage are cutting it by 40-70%. The promise: automate the slow, repetitive parts of incident response (alert correlation, log parsing, runbook lookup) so engineers spend their time on judgement calls. We manage 50+ production Kubernetes clusters with 24/7 coverage; this article covers what we've seen ship and what's still hype. Need help wiring AI into your incident workflow? Our SRE consulting services can audit your current MTTR and architect the right stack.

Why traditional incident response fails at scale

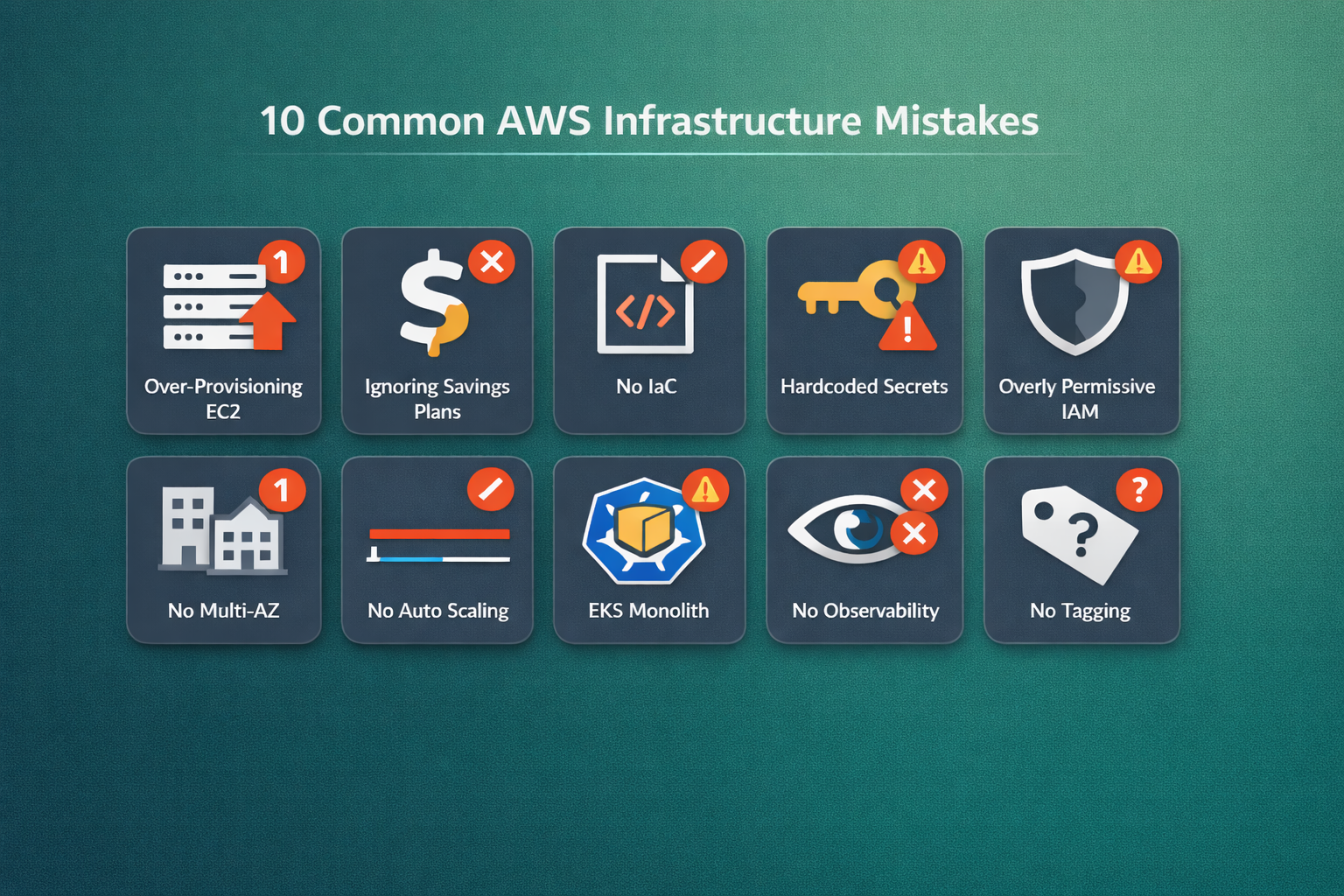

The classic incident response loop (page on-call, open a war room, parse logs by hand, escalate to specialists) was built for an era of single-digit deploy frequency. At 100+ deploys/day across 50+ services, it falls apart. Three failure modes show up consistently in our managed-SRE engagements (and are covered in more depth in our broader SRE best practices guide):

- Alert fatigue. Pages outnumber humans. On-call engineers triage by gut, miss correlations, and burn out.

- Context-switching. Engineers click through 8-12 tools per incident — Datadog, PagerDuty, GitHub, Kubernetes dashboards, runbooks, the prior incident's Slack thread. Each switch is a 15-30 second cost compounded across hundreds of decisions.

- Manual runbooks. Runbooks rot. They were written for the system as it existed two release cycles ago.

Automated incident response has been promised for a decade. What's different in 2026 is that LLMs can read the runbook, the logs, and the diff, all at once. MTTR reduction finally has a tool for the parts humans were never going to do well.

How do AI and LLMs transform SRE incident response?

Three concrete patterns are paying off in production today.

1. Automated triage and routing

An LLM-driven triage layer reads the alert, correlates it with recent deploys and active incidents, and routes to the right team. Tools like Rootly and incident.io reduce false-positive escalations by 60-80%. We've seen our own teams go from 35 pages/week to 8 actionable ones using a similar pipeline backed by our monitoring and observability services.
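The correlation step can be illustrated with a small deterministic pre-filter that runs before any LLM call. This is a minimal sketch, not how Rootly or incident.io work internally: the `Deploy` and `Alert` types, the `route_alert` function, and the `platform-oncall` fallback rota are all hypothetical names, and the two-hour window is borrowed from the "last 2h" deploy context used later in this article.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


@dataclass
class Deploy:
    service: str
    team: str
    deployed_at: datetime


@dataclass
class Alert:
    service: str
    fired_at: datetime


def route_alert(
    alert: Alert,
    deploys: List[Deploy],
    default_team: str = "platform-oncall",  # hypothetical fallback rota
    window: timedelta = timedelta(hours=2),
) -> Tuple[str, Optional[Deploy]]:
    """Return (team, suspect_deploy) for an alert.

    Picks the most recent deploy of the same service that landed inside
    the window before the alert fired; otherwise falls back to the default.
    """
    candidates = [
        d for d in deploys
        if d.service == alert.service
        and timedelta(0) <= alert.fired_at - d.deployed_at <= window
    ]
    if not candidates:
        return default_team, None
    suspect = max(candidates, key=lambda d: d.deployed_at)
    return suspect.team, suspect
```

In a fuller pipeline the suspect deploy would be passed to the model as context rather than treated as the answer; the point here is only that cheap correlation narrows what the LLM has to read.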

2. AI root cause analysis

AI root cause analysis uses retrieval-augmented generation (RAG) over your logs, traces, runbooks, and prior incidents. The LLM ingests a noisy alert, ranks the most likely root causes, and surfaces the relevant runbook snippets. Datadog's Bits AI and Splunk AIOps both ship this pattern out of the box. The hard part isn't the LLM — it's the indexing layer over your observability data.

3. Agentic SRE workflows

Agentic SRE goes further: autonomous agents not only diagnose but also remediate. AWS DevOps Agent and Azure SRE Agent can roll back a bad deploy, scale a stuck deployment, or restart a poisoned pod — gated by approval policies. Most enterprises hit a wall here: the technology works, but writing the safety policies takes longer than building the agent. Our team scopes a "safe action surface" that AI can act on autonomously while humans keep judgment over irreversible operations.

What does an AI-driven incident response architecture look like?

The building blocks are stable across vendors. Three layers, each with discrete responsibilities:

- Ingestion layer. Pulls signals from your observability stack — Datadog, Prometheus, CloudWatch, OpenTelemetry — plus your CD system, runbook repo, and the last 30 days of resolved incidents. The LLM never queries production directly; it reads from a curated index that's rebuilt nightly.

- Retrieval layer. A vector store (typically pgvector, Pinecone, or Weaviate) holding embedded representations of runbooks, postmortems, service-ownership maps, and recent diffs. On every alert, the retrieval layer ranks the top-k most relevant artefacts — usually 8-12 chunks — and ships them to the LLM as context. The hard problem here is chunking strategy: section-level chunks (200-400 tokens) consistently outperform whole-document or sentence-level chunks for incident retrieval.

- Reasoning layer. The LLM itself — typically GPT-4o, Claude 3.5 Sonnet, or a fine-tuned open-weights model on a Bedrock/Vertex AI runtime. Output is structured JSON: a probability-ranked list of root-cause hypotheses, recommended next actions, and confidence scores per hypothesis.
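Section-level chunking, as described for the retrieval layer, might look like the sketch below. It assumes runbooks are stored as Markdown with `#` headings and approximates token counts with whitespace-separated words; both are assumptions, and a real pipeline would count tokens with the embedding model's own tokenizer. The function name `chunk_by_section` is illustrative.

```python
import re
from typing import Dict, List


def chunk_by_section(markdown_text: str, max_tokens: int = 400) -> List[Dict[str, str]]:
    """Split a Markdown runbook into chunks, one or more per heading section.

    Sections longer than max_tokens (approximated as words) are split on
    paragraph boundaries so each chunk stays near the target size.
    """
    chunks = []
    # Zero-width split just before every Markdown heading line.
    for section in re.split(r"(?m)^(?=#{1,6} )", markdown_text):
        section = section.strip()
        if not section:
            continue
        title = section.splitlines()[0].lstrip("# ").strip()
        current, count = [], 0
        for para in section.split("\n\n"):
            n = len(para.split())
            if current and count + n > max_tokens:
                chunks.append({"title": title, "text": "\n\n".join(current)})
                current, count = [], 0
            current.append(para)
            count += n
        chunks.append({"title": title, "text": "\n\n".join(current)})
    return chunks
```

Keeping the section title on every chunk gives the retriever a stable piece of metadata to cite back, which matters when the model is asked to reference a runbook by ID.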

For agentic workflows, a fourth action layer sits on top: a policy engine (OPA or Cerbos) that gates which remediations the agent can execute autonomously. A typical safe-action surface includes: pod restart, HPA scale-up, redeploy of the previous image, feature-flag rollback. Higher-risk operations — DB schema changes, IAM mutations, anything that touches data integrity — always require human approval. End-to-end latency from page to first hypothesis lands at 8-15 seconds; engineers report this is the threshold where AI feels useful instead of intrusive.
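The gating logic can be sketched in plain Python as a default-deny allowlist. This is a stand-in for illustration only: a production setup would express the policy in OPA or Cerbos as described above, and the action identifiers and the 0.8 confidence threshold below are assumptions, not values from any vendor.

```python
# Hypothetical identifiers for the safe-action surface listed above.
SAFE_ACTIONS = {
    "pod_restart",
    "hpa_scale_up",
    "redeploy_previous_image",
    "feature_flag_rollback",
}


def gate(action_type: str, confidence: float, min_confidence: float = 0.8) -> str:
    """Decide whether an agent-proposed action may run without a human.

    Default-deny: anything outside the allowlist (schema changes, IAM
    mutations, unknown action types) always requires approval.
    """
    if action_type in SAFE_ACTIONS and confidence >= min_confidence:
        return "auto_execute"
    return "require_approval"
```

The design choice worth noting is the direction of the default: the allowlist enumerates what is safe, so a new or misspelled action type falls through to human approval rather than executing.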

A representative prompt template the reasoning layer uses:

System: You are an SRE incident responder. Output JSON only.

Schema: { hypotheses: [{cause, evidence, confidence, runbook_id}], actions: [{type, target, justification}] }

Context:

- Alert: {alert_payload}

- Recent deploys (last 2h): {deploy_events}

- Top retrieved runbooks: {runbook_chunks}

- Last 5 similar incidents: {historical_incidents}

- Service ownership: {ownership_map}

Rank hypotheses by confidence. Cite the runbook_id for each. Suggest only safe actions.

Structured output is non-negotiable: free-form text is hard to action and harder to audit. Every production deployment we've seen ships with strict JSON schemas validated by Pydantic or Zod before any downstream automation triggers.
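A validation step for the schema in the template could be sketched as follows. To keep the example dependency-free it uses only the standard library rather than Pydantic or Zod; the `parse_response` name and the assumption that confidence is a number between 0 and 1 are mine, not part of the template.

```python
import json

HYPOTHESIS_KEYS = {"cause", "evidence", "confidence", "runbook_id"}
ACTION_KEYS = {"type", "target", "justification"}


def parse_response(raw: str) -> dict:
    """Parse the model's reply and raise ValueError if it breaks the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("top level must be a JSON object")

    for key, required in (("hypotheses", HYPOTHESIS_KEYS), ("actions", ACTION_KEYS)):
        items = data.get(key)
        if not isinstance(items, list):
            raise ValueError(f"'{key}' must be a list")
        for item in items:
            if not isinstance(item, dict) or required - item.keys():
                raise ValueError(f"each entry in '{key}' needs {sorted(required)}")

    for hyp in data["hypotheses"]:
        conf = hyp["confidence"]
        # Assumption: confidence is a probability in [0, 1].
        if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
            raise ValueError("confidence must be a number between 0 and 1")

    # Enforce the ranking the prompt asks for instead of trusting the model.
    data["hypotheses"].sort(key=lambda h: h["confidence"], reverse=True)
    return data
```

Anything that raises here would be logged and dropped before reaching automation, so a malformed reply degrades to "no suggestion" rather than to an unintended action.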

What observability prerequisites do you need before adding AI?

Your AI-incident-response stack is only as good as the signal it can read. Three prerequisites separate teams that ship from teams that pilot for six months and quit:

- Structured logs with consistent fields. JSON logs with at minimum: service, trace_id, severity, timestamp, error.type, error.stack. Free-form text logs force the LLM to do parsing work that wastes tokens and degrades accuracy. Teams that pass through a logging-format cleanup typically see 30-40% better RCA quality on the same model.

- Distributed tracing on the critical path. OpenTelemetry traces let the LLM follow a request across services. Without traces, the model can only see logs in isolation and struggles to correlate cause and effect, particularly in microservice architectures where a single user request touches 8-12 services. Sampling at 1-5% is fine; sampling at 0% is not.

- Indexed runbooks with embeddings. Plain-text runbooks in Confluence are not enough. They need to live in a vector store, chunked at the section level, with metadata tags (service, severity, last_validated). Stale runbooks poison retrieval; keep a freshness gate that flags any runbook untouched in 90+ days. We typically see 40-60% of an organisation's runbooks fail this freshness check on first audit.
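The freshness gate from the last prerequisite is simple enough to sketch directly. The example assumes each runbook record carries an `id` and a `last_validated` date, matching the metadata tags named above; the record shape and the function name are illustrative.

```python
from datetime import date, timedelta
from typing import Dict, List


def stale_runbooks(runbooks: List[Dict], today: date, max_age_days: int = 90) -> List[str]:
    """Return IDs of runbooks last validated max_age_days or more ago."""
    cutoff = today - timedelta(days=max_age_days)
    return [r["id"] for r in runbooks if r["last_validated"] <= cutoff]
```

Run as a nightly job alongside reindexing, a check like this can either exclude stale runbooks from retrieval or pass a staleness flag through to the model so it can weigh the source accordingly.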

Teams skipping these prerequisites typically see 20-30% MTTR improvements (from triage and routing alone). Teams investing in them see the 60-70% numbers AWS and Rootly report. The infrastructure work is most of the lift, which is why a 90-day plan beats a 30-day plan and a 30-day plan beats a 7-day pilot.

What's the real-world MTTR impact?

Numbers from teams that have actually shipped this:

| Company | Where AI was applied | Result |
|---|---|---|
| Halodoc | AI-assisted log analysis on EKS | 35% faster resolution + 35% infra cost cut |
| TCBS | AIOps for noise reduction | 30% fewer escalations |
| DigitalOcean | LLM-powered RCA across managed services | 36,000 engineering hours saved/year |
| SquareOps clients (typical) | Triage + RAG runbooks on managed K8s | 50-65% MTTR reduction in 90 days |

The pattern: incident response automation works first on L1/L2 triage, then on root cause acceleration, then (cautiously) on remediation. Skip steps and you ship an autonomous agent that is confidently wrong at 3 a.m. (Our managed-SRE 99.99% uptime guide has the SLA math behind these numbers.)

Which AI SRE tools should you evaluate in 2026?

The 2026 AI SRE tooling landscape is dense; here's the shortlist worth a 30-minute demo:

- Rootly — Best-in-class incident workflow with AI-driven correlation; strong PagerDuty + Slack integration.

- incident.io — Closest competitor to Rootly. Better post-mortems, weaker AI agents at present.

- Datadog Bits AI — Embedded inside Datadog. If you're already paying Datadog, free win.

- Sherlocks.ai — RAG-first AIOps; good at log noise reduction.

- AWS DevOps Agent — Native to AWS, deep CloudWatch integration. Runaway favourite for AWS-heavy stacks.

- Azure SRE Agent — Same idea on Azure; rapid feature parity catch-up.

None of these is a turnkey replacement for an SRE team; they accelerate the SRE team you already have. LLM incident management is a force multiplier, not a substitute. For multi-cloud shops we typically recommend layering Bits AI or AWS DevOps Agent on top of an existing PagerDuty + Datadog stack rather than ripping out the workflow. Our managed Kubernetes services include this evaluation as part of the onboarding playbook.

How to implement AI in your SRE workflow

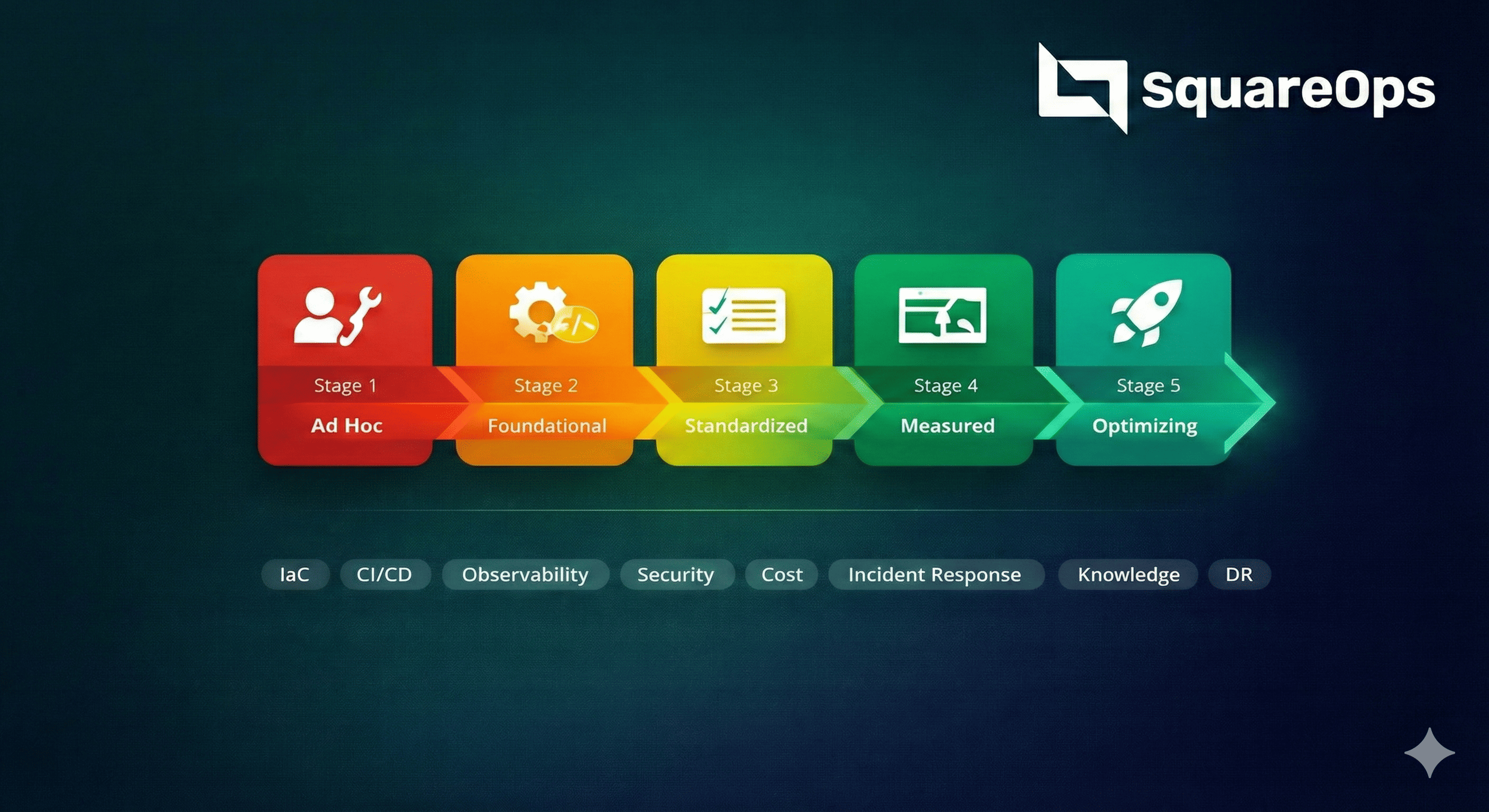

Four phases, 90 days, no big-bang rollouts. (If you're newer to the role, our beginner's guide to SRE covers the fundamentals before you layer AI on top.)

- Audit MTTR and incident patterns. Pull six months of incident data. Categorise by service, severity, and root-cause class. The 80/20 will surface — 5-7 incident types account for 60-70% of MTTR.

- Choose the AI layer that fits. Bottleneck is alert noise → triage layer first. Bottleneck is log parsing → RAG-driven RCA. Bottleneck is repetitive remediation → cautiously pilot agentic flows.

- Integrate with your observability stack. AI is only as good as the signal it can read. Tagged metrics, structured logs, distributed tracing — the prerequisites haven't changed.

- Measure and iterate. Track MTTR by tier, false-positive rate, and engineer hours saved. Re-evaluate at 30, 60, and 90 days.
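The audit in the first phase amounts to a Pareto cut over incident history. A minimal sketch, assuming each incident has already been exported as a record with a `root_cause_class` label and a `minutes_to_resolve` figure (both field names are hypothetical):

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def top_incident_classes(incidents: List[Dict], coverage: float = 0.7) -> List[Tuple[str, float]]:
    """Return the largest root-cause classes that together reach `coverage`
    of total resolution time, ordered from most to least costly."""
    totals = defaultdict(float)
    for inc in incidents:
        totals[inc["root_cause_class"]] += inc["minutes_to_resolve"]

    grand_total = sum(totals.values())
    picked, running = [], 0.0
    for cls, minutes in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        if running >= coverage * grand_total:
            break
        picked.append((cls, minutes))
        running += minutes
    return picked
```

The classes this returns are the natural candidates for the first triage rules and runbook embeddings, since they are where automation recovers the most time.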

Need help executing? SquareOps offers 24/7 SRE support with implementation included. The same team handles broader DevOps consulting services when the bottleneck is upstream of the incident.

What does AI-driven incident response actually cost to run?

The economics are unambiguous in 2026. A typical AI incident response stack costs $0.20-0.50 per incident in LLM inference (GPT-4o or Claude 3.5 Sonnet at current pricing), plus ~$300-800/month in vector-store and retrieval infrastructure. Compare with the cost of one engineer-hour at $80-150 fully loaded:

| Cost component | Monthly (at ~100 incidents) |
|---|---|
| LLM inference (avg 6,000 tokens/incident) | $30 - $50 |
| Vector store + embeddings infrastructure | $300 - $800 |
| Embedding refresh (nightly reindex) | $50 - $150 |
| Engineer hours saved (~25 hrs at $80-150) | -$2,000 to -$3,750 |
| Net savings per 100 incidents (after the upper-bound $1,000 stack cost) | $1,000 - $2,750 |
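The arithmetic behind the table can be checked in a few lines. The inputs are the table's own figures; the variable names are illustrative. Note that the table's net range of $1,000 to $2,750 is what results when the upper-bound stack cost ($1,000) is subtracted from both ends of the savings range; pairing the highest savings with the lowest stack cost would give a best case of $3,370.

```python
# Monthly figures from the cost table, in dollars, as (low, high) pairs.
llm_inference = (30, 50)
vector_store = (300, 800)
embedding_refresh = (50, 150)
hours_saved = 25
hourly_rate = (80, 150)

# Sum the low ends together and the high ends together.
stack_cost = tuple(sum(parts) for parts in zip(llm_inference, vector_store, embedding_refresh))
gross_saved = tuple(hours_saved * rate for rate in hourly_rate)

worst_case = gross_saved[0] - stack_cost[1]   # lowest savings, highest cost
table_upper = gross_saved[1] - stack_cost[1]  # highest savings, highest cost
best_case = gross_saved[1] - stack_cost[0]    # highest savings, lowest cost

print(stack_cost, gross_saved)             # (380, 1000) (2000, 3750)
print(worst_case, table_upper, best_case)  # 1000 2750 3370
```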

The math gets better at scale. A team handling 500 incidents/month typically sees $7-15K/month net savings — AI-stack cost grows sub-linearly while engineer-time savings grow linearly. The exception: tiny teams (<3 SREs, <20 incidents/month) where fixed infrastructure cost dominates. For those teams, lean on Datadog Bits AI or a hosted SaaS solution rather than building your own. Build vs. buy economics flip around the 100-incidents-per-month mark.

Token economics deserve a closer look. The dominant cost driver isn't the LLM call itself; it's the size of the context sent with it. A naive implementation that ships every relevant log line, every recent deploy, and every postmortem chunk burns 30,000+ tokens per incident, at $0.30+ per incident. Production-grade implementations aggressively trim context via reranking (Cohere Rerank or BGE) and stay under 8,000 tokens per call. That roughly 4x cost difference is the difference between AI incident response being a budget line and being invisible.
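Trimming context to a token budget can be sketched as a greedy selection over reranked chunks. The example assumes each chunk already carries a relevance `score` from a reranker and a precomputed `tokens` count; it does not call Cohere Rerank or BGE, and the `trim_context` name and record shape are illustrative.

```python
from typing import Dict, List, Tuple


def trim_context(chunks: List[Dict], budget_tokens: int = 8000) -> Tuple[List[Dict], int]:
    """Keep the highest-scoring chunks that fit within the token budget.

    Returns the kept chunks (best first) and the total tokens used.
    """
    kept, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c["score"], reverse=True):
        if used + chunk["tokens"] > budget_tokens:
            continue  # too large for the remaining budget; a smaller chunk may still fit
        kept.append(chunk)
        used += chunk["tokens"]
    return kept, used
```

Greedy selection is not optimal in the knapsack sense, but it keeps the most relevant material first, which is usually the property that matters for RCA quality.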

Ready to cut your MTTR by 60%?

The teams shipping AI-driven SRE in 2026 aren't the ones with the biggest budget — they're the ones with the cleanest observability data and a clear plan for what to automate first. SquareOps offers SRE consulting services with a free initial assessment that includes an MTTR baseline, top-three incident-class teardown, and a 90-day implementation roadmap. Book your call.