What is MLOps?

SquareOps provides MLOps consulting and AI infrastructure services to help organizations move machine learning models from experimentation to production. We build the pipelines, compute infrastructure, and operational workflows that bridge the gap between data science teams and production systems — so your models deliver value, not just predictions in a notebook.

MLOps (Machine Learning Operations) applies DevOps principles to the ML lifecycle: data preparation, model training, deployment, monitoring, and retraining. Without MLOps, organizations hit a common bottleneck: data scientists build promising models but lack the infrastructure to deploy, scale, and maintain them in production. Our Kubernetes-native approach provides repeatable, scalable ML infrastructure that runs on AWS, GCP, or Azure.

Whether you need to deploy a single recommendation model or operate a fleet of LLMs with GPU clusters, our ML platform engineers design infrastructure that scales with your AI ambitions while keeping compute costs under control.

MLOps Consulting Services We Provide

End-to-end machine learning operations — from data pipelines and experiment tracking to model serving and GPU cost optimization.

ML Pipeline Automation

Orchestrate end-to-end ML workflows with Kubeflow Pipelines or Airflow, alongside MLflow for experiment tracking and Ray Train for distributed training. Pipelines cover data ingestion, feature engineering, model training, validation, and model registration.
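To make the idea of an orchestrated pipeline concrete, here is a minimal sketch of dependency-ordered execution using only the Python standard library. The stage names and the `run_pipeline` helper are hypothetical and chosen for illustration; tools such as Kubeflow Pipelines or Airflow add scheduling, retries, and distributed execution on top of the same dependency-graph idea.

```python
from graphlib import TopologicalSorter

# Hypothetical stage names. Each stage maps to the set of stages it depends on.
PIPELINE = {
    "ingest": set(),
    "features": {"ingest"},
    "train": {"features"},
    "validate": {"train"},
    "register": {"validate"},
}


def execution_order(dag):
    """Return the stages in an order that respects every dependency."""
    return list(TopologicalSorter(dag).static_order())


def run_pipeline(dag, steps):
    """Call each stage's function in dependency order.

    `steps` maps a stage name to a callable that receives the results of
    all stages run so far and returns this stage's result.
    """
    context = {}
    for stage in execution_order(dag):
        context[stage] = steps[stage](context)
    return context
```

Because the graph is explicit, adding a stage means adding one entry and its dependencies rather than editing a hand-ordered script.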

Model Deployment & Serving

Production-grade model serving with KServe, Triton Inference Server, Ray Serve, or TorchServe. A/B testing, canary rollouts, autoscaling, and multi-model endpoints on Kubernetes.
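A canary rollout sends a small, fixed share of traffic to a new model version. The sketch below shows one common way to make that split deterministic, by hashing a request ID into a bucket. The function name `route` and the ID format are assumptions for illustration; in practice the serving platform's routing layer usually performs this split.

```python
import hashlib


def route(request_id: str, canary_percent: int) -> str:
    """Pick 'canary' or 'stable' deterministically from the request ID."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a bucket in the range 0..99.
    bucket = int.from_bytes(digest[:8], "big") % 100
    return "canary" if bucket < canary_percent else "stable"
```

Hashing rather than random sampling means the same request ID always reaches the same model version, which keeps comparisons between versions consistent.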

GPU Infrastructure & Orchestration

Provision and manage GPU clusters with NVIDIA GPU Operator, Karpenter for GPU node autoscaling, spot instance strategies, and GPU time-slicing for cost-efficient AI compute.

Model Monitoring & Observability

Track model performance, detect data drift and concept drift, and monitor prediction quality with Prometheus, Grafana, and Evidently AI. Trigger automated retraining when those metrics degrade.
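As an illustration of data drift detection, the sketch below computes the Population Stability Index (PSI) between a reference sample (for example, training data) and a current sample (for example, recent production inputs) for one numeric feature. It is a simplified stand-in rather than the method used by any particular monitoring tool, and it assumes both samples are non-empty. The bin count and the `eps` floor are illustrative choices.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the reference range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))
```

A common rule of thumb treats a PSI below 0.1 as stable and above 0.25 as significant drift, but suitable thresholds depend on the feature and should be tuned per model.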

LLMOps & GenAI Infrastructure

Deploy and scale large language models with vLLM and TGI. RAG pipeline setup with vector databases (Weaviate, pgvector), fine-tuning infrastructure, and prompt management.
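The retrieval step of a RAG pipeline ranks stored document embeddings by similarity to the query embedding. The sketch below shows that step with plain cosine similarity over an in-memory list. The `top_k` helper and the index layout are hypothetical; a vector database performs the same ranking with approximate indexes at much larger scale.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """Return the IDs of the k documents most similar to the query.

    `index` is a list of (doc_id, embedding) pairs.
    """
    scored = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]
```

The retrieved documents are then inserted into the prompt sent to the language model.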

Feature Store & Data Pipelines

Build feature stores with Feast, real-time and batch data pipelines, data versioning with DVC, and reproducible training datasets for consistent model performance.
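Reproducible training datasets depend on being able to tell whether two datasets are identical. One way to do that is a content fingerprint, sketched below for rows held as JSON-serializable dictionaries. The `dataset_fingerprint` function is a hypothetical helper, not DVC's implementation, although data versioning tools rely on the same content-hashing idea.

```python
import hashlib
import json


def dataset_fingerprint(rows):
    """Return a hash that changes whenever any row's content changes.

    Rows are hashed individually and the hashes are sorted, so the
    result does not depend on row order.
    """
    row_hashes = sorted(
        hashlib.sha256(json.dumps(row, sort_keys=True).encode("utf-8")).hexdigest()
        for row in rows
    )
    return hashlib.sha256("".join(row_hashes).encode("ascii")).hexdigest()
```

Storing the fingerprint next to each trained model makes it possible to check later exactly which data produced it.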

MLOps vs DevOps vs DataOps

DevOps

Automates the software delivery lifecycle — building, testing, and deploying deterministic code. Handles CI/CD pipelines, infrastructure as code, and deployment workflows.

Core Question

"How do we ship code faster and more reliably?"

MLOps

Extends DevOps for ML: versioning datasets and models, managing GPU compute, tracking experiments, monitoring model drift, and automating retraining loops for probabilistic systems.

Core Question

"How do we deploy and maintain ML models in production?"

DataOps

Focuses on data quality, data pipelines, and data governance. Ensures reliable, timely data flows from sources to consumers — whether those consumers are dashboards, APIs, or ML models.

Core Question

"How do we deliver trustworthy data faster?"

MLOps requires DevOps as a foundation. At SquareOps, we provide both — so your ML infrastructure is built on production-grade pipelines, not research prototypes.

MLOps Platform Comparison

Choosing the right MLOps platform depends on your team size, cloud strategy, and vendor lock-in tolerance.

| Feature | Kubeflow | MLflow | SageMaker | Vertex AI |
|---|---|---|---|---|
| Open Source | Yes (CNCF) | Yes (Linux Foundation) | No | No |
| Vendor Lock-in | None | None | AWS only | GCP only |
| GPU Support | Native K8s GPU | Via infrastructure | Managed instances | Managed instances |
| Pipeline Orchestration | Built-in (Argo-based) | Basic (MLflow Pipelines) | SageMaker Pipelines | Vertex Pipelines |
| Model Registry | Third-party | Built-in | Built-in | Built-in |
| Best For | K8s-native teams | Experiment tracking | AWS-only shops | GCP-only shops |

SquareOps recommends open-source, Kubernetes-native toolchains to avoid vendor lock-in. We often combine Kubeflow for orchestration with MLflow for experiment tracking, Ray for distributed training, and KServe for model serving.

How We Build Your MLOps Platform

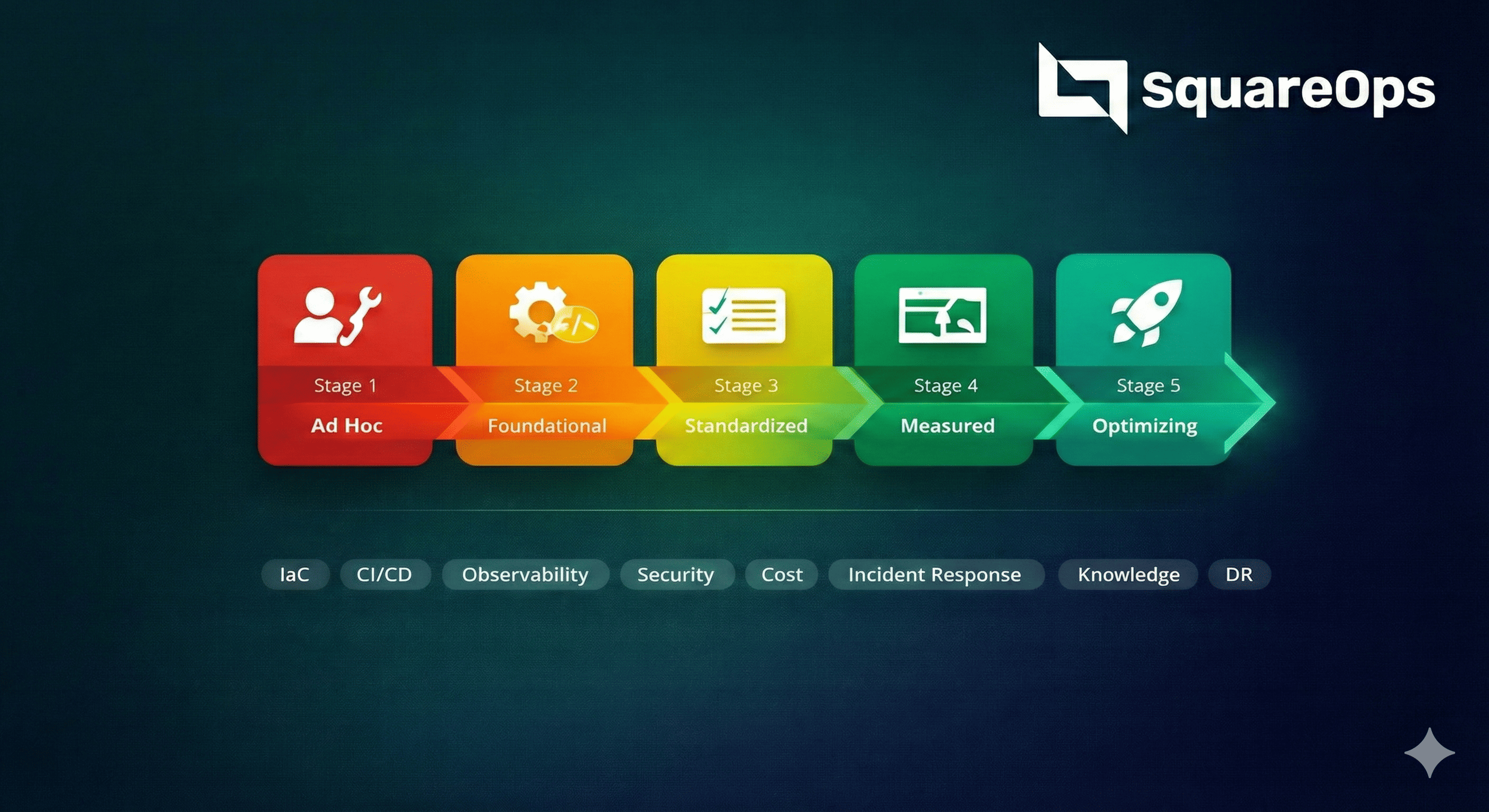

A structured approach to ML infrastructure that delivers your first model to production within 4 weeks and scales from there.

We implement incrementally — starting with the highest-impact pipeline and expanding to cover your full ML lifecycle.

ML Workflow Assessment

Audit current ML workflows, data pipelines, model inventory, and infrastructure. Identify bottlenecks between experimentation and production deployment.

Infrastructure Design

Design GPU-optimized Kubernetes clusters, storage architecture for datasets and artifacts, and networking for model serving endpoints.

Pipeline & Registry Build

Implement ML pipelines, experiment tracking, model registry, and feature stores. Integrate with your existing CI/CD workflows for model promotion.

Model Deployment Setup

Configure model serving infrastructure with autoscaling, A/B testing, canary deployments, and rollback capabilities. First model live in production.

Monitoring & Retraining

Deploy model monitoring for drift detection, prediction quality, and latency. Set up automated retraining triggers and continuous improvement loops.

Ready to productionize your ML models?

Get a free MLOps maturity assessment and AI infrastructure roadmap from our ML platform engineers.

AI Infrastructure for Every Stage

From GPU-accelerated training clusters to cost-optimized inference endpoints, we design AI infrastructure that scales with your ML workloads and organizational maturity.

Startups

Single GPU, MLflow experiment tracking, basic pipelines, and managed model serving to ship your first ML feature fast

Scale-ups

Multi-GPU training, Kubeflow pipelines, A/B model serving, feature stores, and automated retraining for growing model fleets

Enterprise

Multi-cluster GPU pools, compliance-ready pipelines, model governance, dedicated SRE support, and cost attribution per team

GenAI / LLM

vLLM/TGI serving, RAG pipelines with vector databases, fine-tuning infrastructure, H100 clusters, and inference cost optimization

Who Needs MLOps Services?

ML infrastructure services are essential for any organization deploying models to production — whether it's a single recommendation engine or a fleet of LLMs.

Fraud Detection & Risk Scoring

Real-time inference for transaction fraud models, credit scoring, and anomaly detection with strict latency requirements.

How We Help

Low-latency model serving, A/B testing for model updates, and compliance-ready pipelines with full audit trails.

Diagnostic Models & HIPAA

Medical imaging, NLP for clinical notes, and patient risk prediction models that require HIPAA-compliant infrastructure.

How We Help

HIPAA-compliant ML pipelines, data encryption at rest and in transit, and model versioning with complete lineage.

Recommendations & Demand Forecasting

Recommendation engines, search ranking, dynamic pricing, and demand forecasting models that directly impact revenue.

How We Help

Real-time feature stores, A/B model serving for recommendations, and auto-scaling inference during traffic spikes.
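Auto-scaling during traffic spikes can be illustrated with the proportional rule used by the Kubernetes Horizontal Pod Autoscaler: scale the replica count by the ratio of the observed metric to its target, then round up. The sketch below applies that rule with replica limits. The function name, the default limits, and the choice of metric (for example, requests per second per replica) are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling: ceil(current * observed / target), clamped."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))
```

For example, 4 replicas observing 150 requests per second each against a target of 100 scale to 6 replicas.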

ML-Powered Product Features

AI/ML features embedded in SaaS products — smart search, content generation, automated workflows, and predictive analytics.

How We Help

Multi-tenant model serving, feature flags for ML experiments, and unified pipelines for training and inference.

LLM Fine-Tuning & RAG Pipelines

Building products on top of foundation models that require GPU clusters, vector databases, and cost-efficient inference at scale.

How We Help

H100/A100 GPU provisioning, vLLM serving, RAG with pgvector/Weaviate, and inference cost optimization with quantization.
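Quantization cuts inference cost by storing weights in fewer bits. The sketch below shows symmetric 8-bit quantization of a list of weights with a single scale factor. It is a simplified illustration only; production LLM quantization schemes typically work per channel or per group and are more involved. The function names are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0  # avoid dividing by zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the quantized integers."""
    return [q * scale for q in quantized]
```

Each recovered weight differs from the original by at most about half the scale factor, which is the accuracy given up in exchange for the smaller memory footprint.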

Real-Time Inference at the Edge

Computer vision, sensor fusion, and real-time decision models for robotics, autonomous vehicles, and industrial IoT.

How We Help

Edge deployment with ONNX/TensorRT, model compression, CI/CD for edge models, and centralized monitoring.